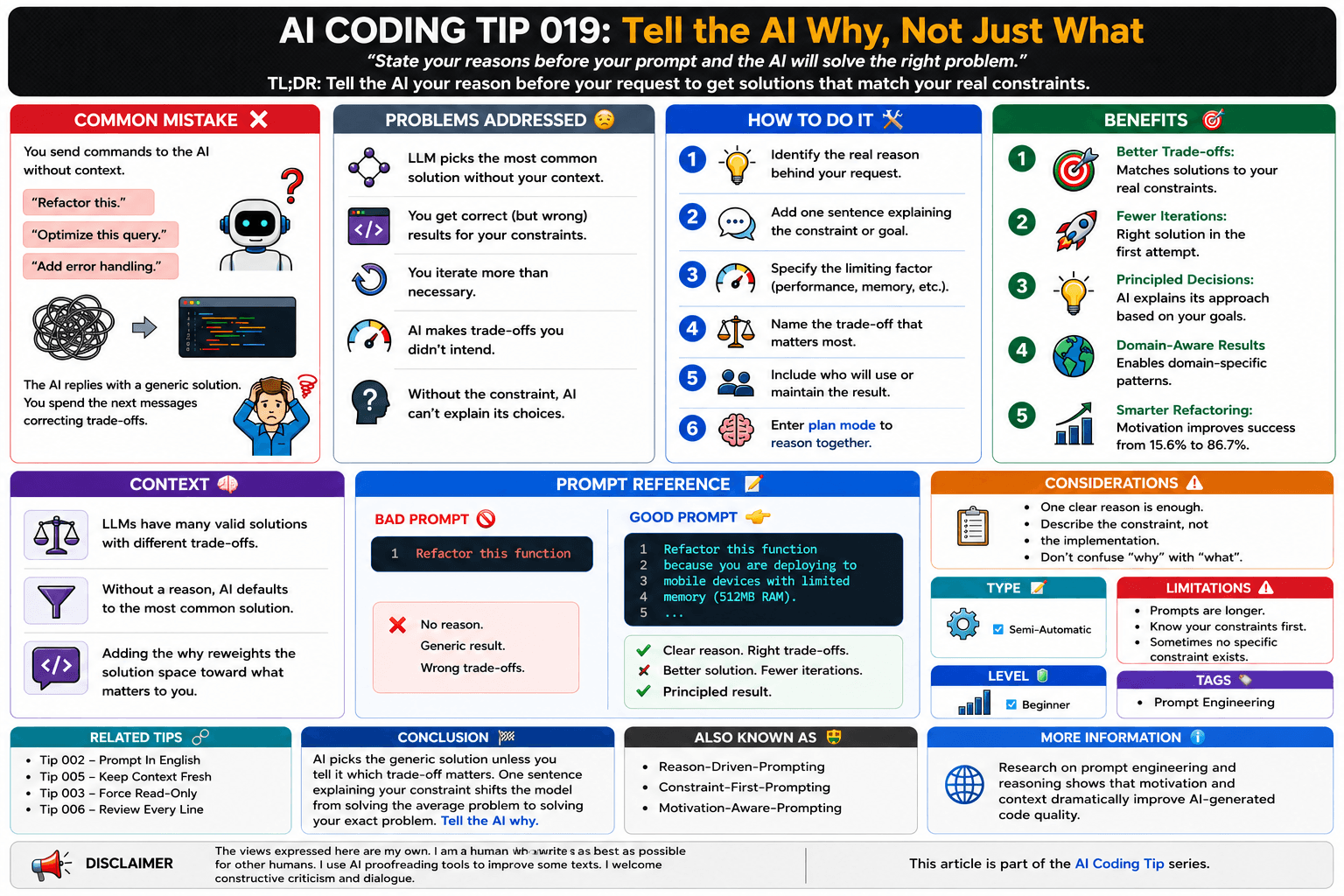

AI Coding Tip 019 - Tell the AI Why, Not Just What

State your reasons before your prompt and the AI will solve the right problem.

I’m a senior software engineer who loves clean code and declarative designs, and a fan of S.O.L.I.D. and agile methodologies.

TL;DR: Tell the AI your reason before your request to get solutions that match your real constraints.

Common Mistake ❌

You send commands to the AI without context.

"Refactor this."

"Optimize this query."

"Add error handling."

The AI complies, but it returns a generic (and possibly hallucinated) solution that solves the average case, not your specific one.

You spend the next three messages correcting the trade-offs you never mentioned.

Problems Addressed 😔

The LLM has dozens of valid solutions for any request and picks the most statistically common one without your context.

You get results that are correct but wrong for you: technically valid code that doesn't fit your constraints.

You iterate more than necessary because the AI guessed wrong about what mattered.

The model makes trade-offs you didn't intend, favoring speed when you needed readability.

When the AI doesn't know the constraint, it can't explain why it chose a specific approach.

How to Do It 🛠️

Identify the real reason behind your request before you type it.

Add one sentence explaining the constraint or goal before your request.

Specify the limiting factor: performance, memory, audience, team skill level, or deadline.

Name the trade-off that matters most to you.

Include who will use or maintain the result.

Force yourself to start in plan mode so you and the AI reason through the approach before any code is written.
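The steps above can be sketched as a small prompt template. This is a minimal illustration under stated assumptions, not a tool from this series: `PromptContext`, `build_prompt`, and their fields (`reason`, `trade_off`, `audience`) are hypothetical names chosen for this example. It places the reason before the request, as the TL;DR suggests.

```python
from dataclasses import dataclass


@dataclass
class PromptContext:
    """Hypothetical container for the 'why' behind a coding request."""
    reason: str     # the real constraint or goal (performance, memory, deadline...)
    trade_off: str  # the quality that wins when two goals conflict
    audience: str   # who will use or maintain the result


def build_prompt(request: str, context: PromptContext) -> str:
    """Put the reason first, then the request, so the model reads the constraint before the task."""
    lines = [
        f"Context: {context.reason}",
        f"Priority: {context.trade_off}",
        f"Audience: {context.audience}",
        f"Task: {request}",
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    ctx = PromptContext(
        reason="We deploy to mobile devices with 512MB RAM.",
        trade_off="Favor memory usage over speed.",
        audience="Many developers will maintain this code, so keep it readable.",
    )
    print(build_prompt("Refactor this function.", ctx))
```

The field names matter less than the order: the constraint reaches the model before the task does.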

Benefits 🎯

Better Trade-offs: The LLM navigates to solutions that match your actual constraints instead of the generic average.

Fewer Iterations: You get the right solution on the first attempt instead of correcting trade-offs after the fact.

Principled Decisions: The AI can explain why it chose a specific approach because it knows what you optimized for.

Domain-Aware Results: Context enables domain-specific patterns that generic requests never trigger.

Smarter Refactoring: One study reports that adding motivation to refactoring requests raised the success rate from 15.6% to 86.7%.

Context 🧠

Why the Why Changes Everything

For any given coding problem, the LLM can give you dozens of valid solutions.

Each trades off different qualities: speed vs. memory, readability vs. performance, simplicity vs. flexibility.

Without a reason, the model defaults to the most statistically common solution from its training data.

That solution works for the generic case.

It rarely matches your specific constraints.

When you add the why, the model reweights the entire solution space toward what matters to you.

"Refactor this function" and "Refactor this function because you are deploying to edge devices with 512MB RAM" activate completely different reasoning paths.

The second prompt pushes the model to consider memory-efficient algorithms, smaller data structures, and lazy-loading patterns.
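To make this concrete, here is a hypothetical pair of refactorings for the same small function. The function names are made up for illustration, and this is not actual model output. Both versions are valid; only the stated constraint tells the model which one you need.

```python
from typing import Iterable


def average_generic(lines: Iterable[str]) -> float:
    """A common 'average case' refactor: build a full list, then aggregate.
    Simple to read, but memory grows with the input size."""
    values = [float(line) for line in lines if line.strip()]
    return sum(values) / len(values) if values else 0.0


def average_low_memory(lines: Iterable[str]) -> float:
    """The same result computed in a single streaming pass.
    Only a running total and a count stay in memory, which suits
    a memory-constrained device such as one with 512MB RAM."""
    total = 0.0
    count = 0
    for line in lines:
        if line.strip():  # skip blank lines, like the generic version
            total += float(line)
            count += 1
    return total / count if count else 0.0
```

Given an open file, the streaming version reads one line at a time, while the generic one holds every parsed value at once. Neither is wrong; the stated constraint is what picks one.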

Likewise, a precise instruction like "Never use ellipses because a text-to-speech engine will read this" works better than a bare "Never use ellipses," because the model can apply the reason to cases the rule doesn't cover.

The same principle applies to every coding request.

The same idea holds for the code itself. When you avoid redundant comments, the few you keep should document design decisions: the why, not the what.
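A minimal sketch of a comment that records a design decision, assuming the same 512MB constraint mentioned above. `batched` and `BATCH_SIZE` are hypothetical names for this example. Python 3.12 ships `itertools.batched`, but this version also works on older releases.

```python
from itertools import islice
from typing import Iterable, Iterator, Tuple, TypeVar

T = TypeVar("T")

# Design decision (the why): process records in fixed-size batches instead of
# loading the whole dataset, because the target devices have only 512MB RAM.
# We accept slightly lower throughput in exchange for bounded memory use.
BATCH_SIZE = 1_000


def batched(items: Iterable[T], size: int = BATCH_SIZE) -> Iterator[Tuple[T, ...]]:
    """Yield consecutive tuples of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(items)
    # islice pulls at most `size` items; an empty tuple means the input is exhausted.
    while batch := tuple(islice(iterator, size)):
        yield batch
```

The comment explains why the batches exist; the code already shows what it does.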

Modern models reason more explicitly, so when you state the why, you can review their approach, not just the result.

Prompt Reference 📝

Bad Prompt 🚫

Refactor this function

Good Prompt 👉

Refactor this function because you are deploying to mobile devices with limited memory (512MB RAM).
The trade-off that matters most is memory usage over speed.
Many developers will maintain this code. Keep it readable.
Avoid heavy data structures or lazy-loading patterns that require deep knowledge of the framework.

Considerations ⚠️

One clear reason is enough.

You don't need to write a long paragraph.

The why should describe the constraint, not the implementation.

Don't confuse "why" with "what".

Type 📝

[X] Semi-Automatic

Limitations ⚠️

Adding context makes your prompts longer.

You need to know your own constraints before you can explain them to the AI.

Some requests genuinely have no specific constraint.

In those cases, the generic solution is fine.

Level 🔋

[X] Beginner

Tags 🏷️

- Prompt Engineering

Related Tips 🔗

https://maxicontieri.substack.com/p/ai-coding-tip-002-prompt-in-english

https://maxicontieri.substack.com/p/ai-coding-tip-005-keep-context-fresh

https://maxicontieri.substack.com/p/ai-coding-tip-003-force-read-only

https://maxicontieri.substack.com/p/ai-coding-tip-006-review-every-line

Conclusion 🏁

Every coding problem has multiple valid solutions.

The AI picks the generic one unless you tell it which trade-off matters.

One sentence explaining your constraint shifts the model from solving the average problem to solving your exact problem.

Tell the AI why.

More Information ℹ️

Chain-of-Thought Prompting Elicits Reasoning in Large Language Models

Effective Context Engineering for AI Agents

Prompting Best Practices - Claude API Docs

LLM-Driven Code Refactoring: Opportunities and Limitations

Best Practices for Prompt Engineering with the OpenAI API

Also Known As 🎭

- Reason-Driven-Prompting

- Constraint-First-Prompting

- Motivation-Aware-Prompting

Disclaimer 📢

The views expressed here are my own.

I am a human who writes as best I can for other humans.

I use AI proofreading tools to improve some texts.

I welcome constructive criticism and dialogue.

I draw these insights from 30 years in the software industry, 25 years of teaching, and writing over 500 articles and a book.

This article is part of the AI Coding Tip series.